Is 100 a lot?

"Is 100 a lot?"

My son asked me this the other day.

The answer? It depends.

Have you ever wondered what context really means? What is context switching? What does memory mean? And what do they all have in common?

To explain it in simple terms, imagine you’re having a discussion with someone and they ask you a question. The answer could have different meanings depending on the situation.

For example, when my son asked me, “Is 100 a lot?”, I could have said yes or no, depending on 100 of what.

100 years? Yes, that’s a lot.

100 seconds? Not really.

So the meaning of the same number changes depending on the context.

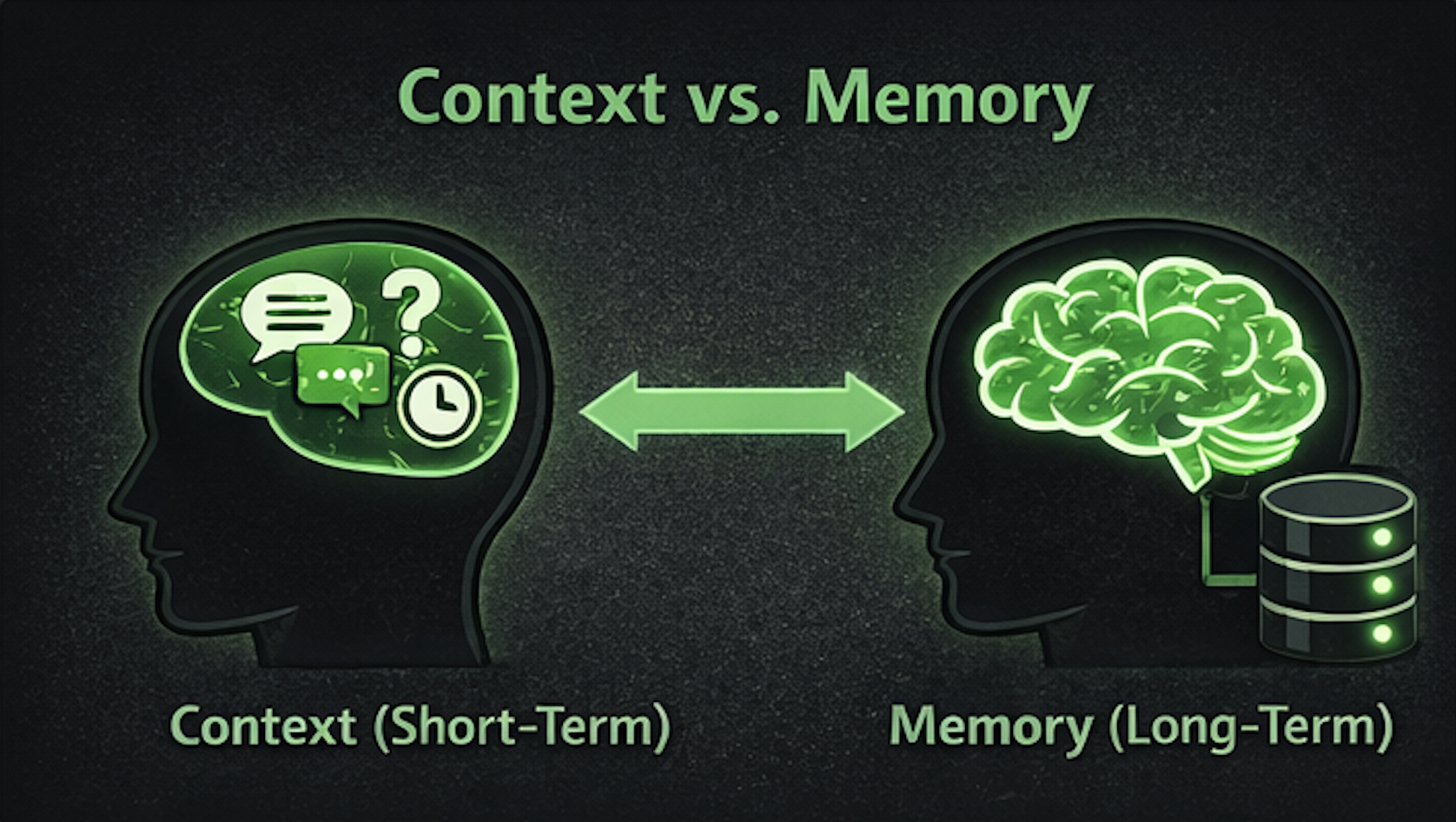

You can think of context as a kind of short-term memory that your brain uses during a specific discussion or situation.

Memory, on the other hand, is when your brain stores this info for the long term. Our brains can store huge amounts of information and recall it whenever it's needed (and sometimes even when it’s 100% not needed 😂).

Context helps us understand what’s happening now, while memory helps us make better future decisions.

So what is context switching?

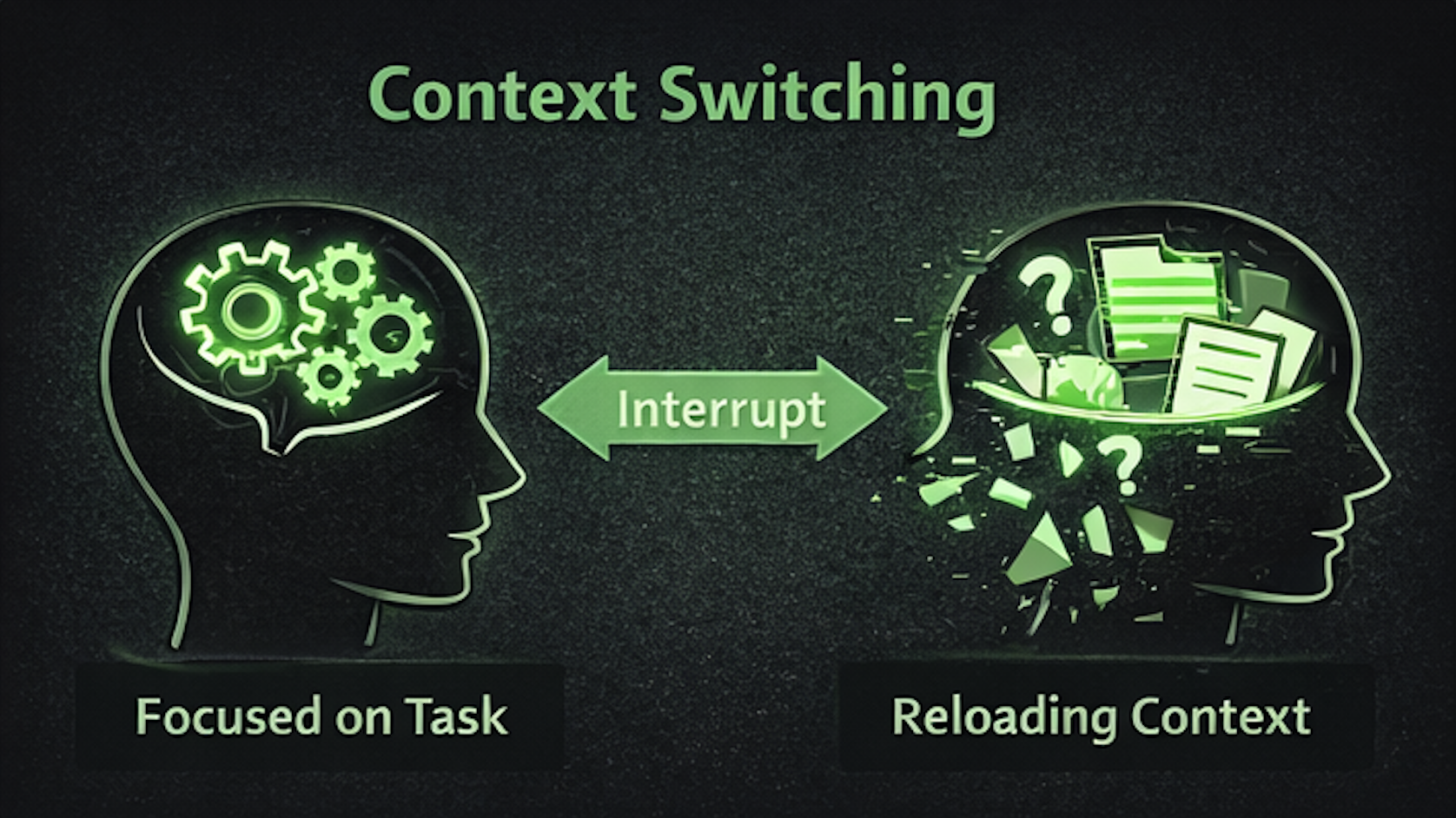

Even though our brain stores vast amounts of information, it can’t actively use it all at the same time.

When you're in a specific situation, like working on a task, your brain focuses on recalling the information relevant to that task and tries to relate every input to it, basically creating a mental state. For a developer, that mental state might include:

- how the system works

- how different components interact

- which approach fits the solution the most

That mental state is the context.

If you want to switch to another task, or if someone interrupts you (an unplanned meeting, a message, a quick question), your brain has to switch contexts. It temporarily leaves one mental state and loads another so it can handle the new situation.

Later, when you return to the task, you don’t just continue where you left off; your brain has to reload that context again.

This is why context switching is expensive for developers. A single interruption can cost 15–30 minutes of productivity.
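To get a feel for how quickly that adds up, here is a tiny back-of-the-envelope sketch. It takes the 15–30 minute range mentioned above at face value; the function name `lost_minutes` and the four interruptions per day are made-up assumptions, purely for illustration.

```python
from typing import Tuple


def lost_minutes(interruptions: int, low: int = 15, high: int = 30) -> Tuple[int, int]:
    """Return the (best-case, worst-case) minutes spent reloading context."""
    return interruptions * low, interruptions * high


# Hypothetical day with four interruptions.
best, worst = lost_minutes(4)
print(f"4 interruptions: {best}-{worst} minutes lost")
```

With those assumed numbers, four interruptions already eat somewhere between one and two hours of the day.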

Interestingly, the same applies to the AI tools and agents we all use nowadays.

When you ask an AI a question, the more context it has, the better the answer it can give you. That’s why a conversation often produces better results than a single, isolated short question.

Most AI apps behave this way. Within each question or chat session, the more context you give the app, the better its output will be.

Some AI apps also let you save some info permanently. This acts like the agent's long-term memory, which it can use across all conversations and chat sessions.
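If it helps to see the difference between the two, here is a minimal toy sketch. It is not how any real AI app is implemented; `ToyAssistant` and its methods are hypothetical names, and no model is called. It only shows the bookkeeping: context lives inside one chat session, while memory survives across sessions.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ToyAssistant:
    """Hypothetical assistant: just the bookkeeping, no real AI behind it."""

    # Long-term memory: saved facts that survive across chat sessions.
    memory: List[str] = field(default_factory=list)
    # Context: messages from the current chat session only.
    context: List[str] = field(default_factory=list)

    def remember(self, fact: str) -> None:
        self.memory.append(fact)

    def new_session(self) -> None:
        # A new chat clears the context but keeps the memory.
        self.context = []

    def build_prompt(self, question: str) -> str:
        # What a model would "see": memory first, then the session so far.
        self.context.append(question)
        return "\n".join(self.memory + self.context)


assistant = ToyAssistant()
assistant.remember("The user usually talks about time in years.")
assistant.build_prompt("I'm thinking about my grandfather's age.")
print(assistant.build_prompt("Is 100 a lot?"))

assistant.new_session()
print(assistant.build_prompt("Is 100 a lot?"))
```

In the first session, the question arrives together with the saved fact and the earlier message. After `new_session()`, the earlier message is gone, but the saved fact is still there.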

Whether it’s AI or humans, context is key.